Advanced deploys (Blue/green, Canary, Traffic vectoring)

Description

Today, GitLab CI treats deploys as binary, all-or-nothing affairs. But some teams use blue/green deploys, canary deploys, and traffic vectoring to reduce risk during deploys. These techniques take many shapes, as people interpret the ideas differently to suit their needs.

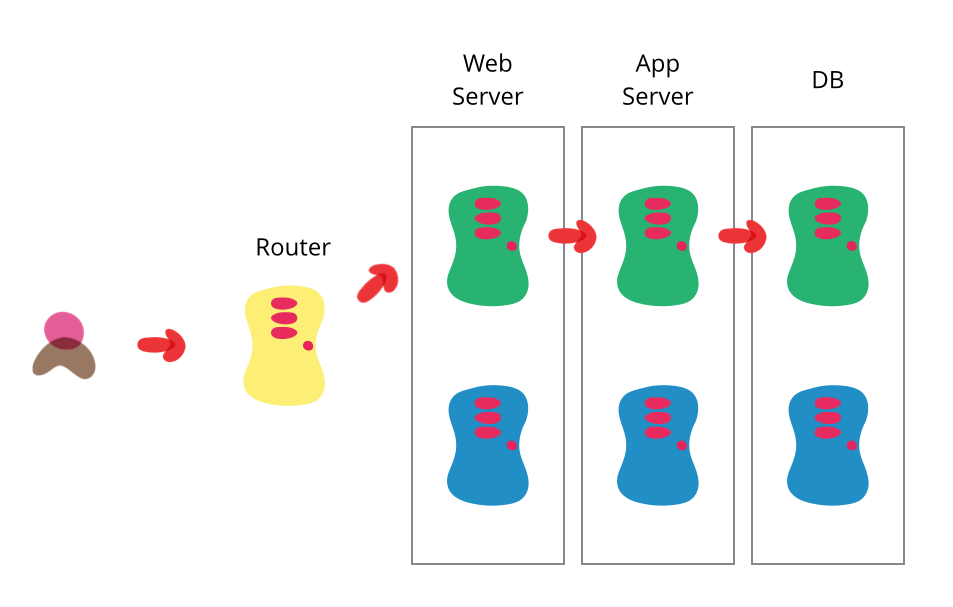

Blue/green deploys

Create two (nearly) identical environments, arbitrarily called blue and green. One isn't better than the other, just separate.

With all traffic going to green, load the new code onto blue and get it ready. Then switch the router so that all new traffic goes to blue, making the cutover as quick as possible.
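The cutover can be sketched as a small state machine. This is only an illustration: the `BlueGreenRouter` class and its method names are hypothetical and do not correspond to any GitLab CI feature or real router API.

```python
class BlueGreenRouter:
    """Hypothetical router that sends all traffic to exactly one environment."""

    def __init__(self, live="green"):
        # Maps environment name -> deployed version (None means nothing deployed).
        self.environments = {"blue": None, "green": None}
        self.live = live

    @property
    def idle(self):
        # The environment that is not currently receiving traffic.
        return "blue" if self.live == "green" else "green"

    def deploy_to_idle(self, version):
        # Load new code onto the idle environment; live traffic is unaffected.
        self.environments[self.idle] = version
        return self.idle

    def cut_over(self):
        # Switch all new traffic to the idle environment in a single step.
        if self.environments[self.idle] is None:
            raise RuntimeError("nothing is deployed to the idle environment")
        self.live = self.idle
        return self.live

    def route(self, request):
        # Every request goes to whichever environment is live right now.
        return self.live
```

Because the previous environment keeps running the old version after `cut_over()`, calling `cut_over()` a second time would switch traffic back to it.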

Canary deploys, incremental rollouts, traffic vectoring

Similar to blue/green deploys, you have a new version of the code running at the same time as the old code, but instead of cutting over 100% of traffic at once, you send a portion of traffic to the new version. This can be done in several ways:

- Given X instances/containers, load the new code on a portion of the instances/containers.

- Or set the router to send a percentage of traffic to new instances/containers running the new code (traffic vectoring).

The first is easier conceptually and fits well with Docker workflows, where it's easy to destroy containers and create new ones running new images. The second is easier when you have stable instances, are willing to allocate double capacity, or when instance granularity is too coarse. For example, if you only have 2 instances in production, the minimum granularity is 1 instance, which is 50% of your capacity. If you want only 10% of traffic to test the new changes, you could add a third instance and send 10% of the total traffic there.
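The granularity arithmetic and the percentage-based alternative can be sketched as follows. The function names are hypothetical, and hashing the request ID into 100 buckets is just one assumed way a router might pick which requests go to the new code.

```python
import hashlib


def min_canary_share(instance_count):
    """Smallest share of capacity you can move when swapping whole instances."""
    if instance_count < 1:
        raise ValueError("need at least one instance")
    return 1 / instance_count


def bucket(request_id):
    """Map a request ID to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def route(request_id, canary_percent):
    """Send roughly canary_percent of requests to the instance running new code."""
    return "canary" if bucket(request_id) < canary_percent else "stable"
```

With 2 instances, `min_canary_share(2)` is 0.5, matching the 50% figure above. Routing by percentage instead, `route(request_id, 10)` sends roughly a tenth of requests to the extra instance, and the same request ID always lands on the same side.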

Often, when people refer to canary deploys, they mean a fixed, small percentage of instances/containers/traffic, like 10%, or maybe just 1 instance. But it's possible to extrapolate from there to an incremental rollout, where you start with a small canary deploy and then increase the share gradually, say from 1% to 10% to 25% to 50%, until finally reaching 100%. If a problem is detected at any point, it should be easy to revert to 0% and fall back to the old code.
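An incremental rollout with automatic fallback might look roughly like this. It is a sketch only: `set_canary_percent` and `is_healthy` are hypothetical callbacks standing in for whatever the router and monitoring actually provide.

```python
def incremental_rollout(steps, set_canary_percent, is_healthy):
    """Walk through increasing traffic percentages, reverting to 0% on failure.

    steps: increasing percentages, e.g. (1, 10, 25, 50, 100)
    set_canary_percent: callback that tells the router what share to send
    is_healthy: callback that returns False when a problem is detected
    """
    for percent in steps:
        set_canary_percent(percent)
        if not is_healthy(percent):
            # Problem detected: send all traffic back to the old code.
            set_canary_percent(0)
            return False
    return True
```

For example, with steps of 1, 10, 25, 50, and 100, a failed health check at 25% leaves the router at 0% and the function returns `False`; if every check passes, the rollout ends at 100% and it returns `True`.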

Proposal

Links / references

- Red Hat demo of blue/green: https://youtu.be/ooA6FmTL4Dk?t=15m53s

- Red Hat demo of canary: https://youtu.be/ooA6FmTL4Dk?t=36m50s

- Kubernetes Deployment Strategies: https://www.weave.works/blog/kubernetes-deployment-strategies